disaster recovery plan (DRP)

What is a disaster recovery plan (DRP)?

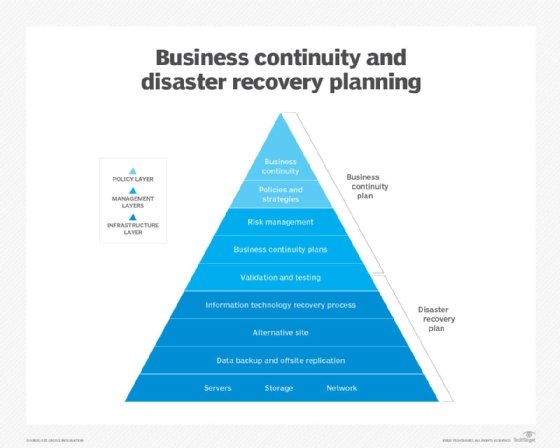

A disaster recovery plan (DRP) is a documented, structured approach that describes how an organization can quickly resume operations after an unplanned incident. A DRP is an essential part of a business continuity plan (BCP). It's applied to the aspects of an organization that depend on a functioning IT infrastructure. A DRP aims to help an organization resolve data loss and recover system functionality so that it can operate in the aftermath of an incident, even if only at a minimal level.

The plan consists of steps to minimize the effects of a disaster so the organization can continue to operate or quickly resume mission-critical functions. Typically, a DRP involves an analysis of business processes and continuity needs. Before generating a detailed plan, an organization often performs a business impact analysis (BIA) and a risk analysis, and establishes recovery objectives.

As cybercrime and security breaches become more sophisticated, organizations must define their data recovery and protection strategies. The ability to quickly handle incidents can reduce downtime and minimize financial and reputational damages. DRPs also help organizations meet compliance requirements while providing a clear roadmap to recovery.

Brief history of DRP

DRP has evolved significantly over the years and has been shaped by various factors, such as technological progress, regulatory demands and the rise of cloud computing.

- Late 1970s. Most businesses started relying heavily on computer information systems. This led to the development of disaster recovery plans.

- 1983. A crucial step toward formalizing disaster recovery planning was taken when U.S. legislation required national banks to create verifiable backup plans.

- Early 1990s. The Disaster Recovery Institute broadened its flagship certification from disaster recovery to business continuity, acknowledging the increasing importance of comprehensive planning that goes beyond IT systems.

- Present. The rise of cloud services and computing has enabled businesses to delegate their DRPs to third-party providers, leading to the development of disaster recovery as a service (DRaaS). This approach readily accommodates business growth and offers advantages regarding recovery times, flexibility and cost.

What is considered a disaster?

A disaster is an event that severely affects the normal operations of a business or organization. Disasters can encompass a wide range of events, including natural phenomena such as earthquakes and floods, as well as man-made incidents, including cyberattacks and industrial accidents.

Types of disasters that organizations can plan for include the following:

- Application failure.

- Communications failure.

- Power outage.

- Natural disaster.

- Malware or other cyberattack.

- Transportation accidents.

- Network outages.

- Data center disaster.

- Building disaster.

- Campus disaster.

- Citywide disaster.

- Regional disaster.

- National disaster.

- Multinational disaster.

Recovery plan considerations

When disaster strikes, the recovery strategy should start at the business level to determine which applications are most important to running the organization. The recovery time objective (RTO) describes the amount of time the critical applications can be down, typically measured in hours, minutes or seconds. The recovery point objective (RPO) describes the age of files that must be recovered from data backup storage for normal operations to resume.
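As a minimal sketch of how the two objectives can be checked after an incident, the following Python compares the age of the last backup against the RPO and the actual downtime against the RTO. The function names, timestamps and four-hour targets are hypothetical values chosen for illustration; real targets come from the organization's own analysis.

```python
from datetime import datetime, timedelta

def meets_rpo(last_backup: datetime, incident: datetime, rpo: timedelta) -> bool:
    """True if the age of the last good backup at incident time is within the RPO."""
    return incident - last_backup <= rpo

def meets_rto(incident: datetime, restored: datetime, rto: timedelta) -> bool:
    """True if the application was restored within the RTO."""
    return restored - incident <= rto

# Hypothetical timeline, for illustration only.
incident = datetime(2024, 1, 10, 14, 0)
last_backup = datetime(2024, 1, 10, 11, 30)  # 2.5 hours before the incident
restored = datetime(2024, 1, 10, 19, 0)      # 5 hours after the incident

print(meets_rpo(last_backup, incident, rpo=timedelta(hours=4)))  # True
print(meets_rto(incident, restored, rto=timedelta(hours=4)))     # False
```

In this example, the backup is recent enough to satisfy the RPO, but the five-hour outage misses the four-hour RTO.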

Recovery strategies define an organization's plans for responding to an incident, while disaster recovery plans describe in detail how the organization should carry out that response. Recovery plans are derived from recovery strategies.

In determining a recovery strategy, organizations should consider the following issues:

- Budget.

- Insurance coverage.

- Resources -- people and physical facilities.

- Management team's position on risks.

- Technology.

- Data and data storage.

- Suppliers.

- Compliance requirements.

Management approval of recovery strategies is important. All strategies should align with the organization's goals. Once DR strategies have been developed and approved, they can be translated into disaster recovery plans.

Types of disaster recovery plans

DRPs can be tailored for a given environment. Specific types of plans include the following:

- Virtualized disaster recovery plan. Virtualization gives organizations opportunities to execute DR more efficiently and easily. A virtualized environment can spin up new virtual machine instances within minutes and provide application recovery through high availability. Testing is also easier, but the plan must validate that applications can be run in DR mode and returned to normal operations within the RPO and RTO.

- Network disaster recovery plan. Developing a plan for recovering a network gets more complicated as the complexity of the network increases. It's important to provide a detailed, step-by-step recovery procedure, test it properly and keep it updated. The plan should include information specific to the network, such as its performance and networking staff.

- Cloud disaster recovery plan. Cloud DR can range from file backup procedures in the cloud to complete replication. Cloud DR can be space-, time- and cost-efficient, but maintaining the disaster recovery plan requires proper management. The manager must know the location of physical and virtual servers. The plan must address security, which is a common issue in the cloud that can be alleviated through testing.

- Data center disaster recovery plan. This type of plan focuses exclusively on the data center facility and infrastructure. An operational risk assessment is a key part of a data center DRP. It analyzes key components, such as building location, power systems and protection, security and office space. The plan must address a broad range of possible scenarios.

- DRaaS. DRaaS represents the commercial adaptation of cloud-based disaster recovery. In this model, a third-party service provider undertakes the replication and hosting of an organization's physical and virtual machines. Governed by a service-level agreement, the provider assumes the role of executing the disaster recovery plan during emergencies.

Scope and objectives of DR planning

The main objective of a DRP is to minimize the negative effects of an incident on business operations. A disaster recovery plan can range in scope from basic to comprehensive. Some DRPs run to as many as 100 pages.

DR budgets vary greatly and fluctuate over time. Organizations can take advantage of free resources, such as online DRP templates, including the Business Continuity Test Template from TechTarget.

Several organizations, including the Business Continuity Institute and Disaster Recovery Institute International, also provide free information and online content.

An IT disaster recovery plan checklist typically includes the following:

- Establishing the range or extent of necessary treatment and activity -- the scope of recovery.

- Gathering relevant network infrastructure documents.

- Identifying the most serious threats and vulnerabilities, as well as the most critical assets.

- Identifying the staff members responsible for those systems and networks.

- Documenting RTO and RPO information.

- Identifying disaster recovery sites -- such as hot sites, warm sites and cold sites.

- Reviewing the history of unplanned incidents and outages, as well as how they were handled.

- Identifying the current disaster recovery procedures and DR strategies.

- Identifying the incident response team.

- Documenting the steps to restart, reconfigure and recover systems and networks.

- Documenting other emergency steps required in the event of a disaster.

- Having management review and approve the DRP.

- Testing the plan.

- Updating the plan.

- Creating a DRP or BCP audit.

The location of a disaster recovery site should be carefully considered in a DRP. Distance is an important, but often overlooked, element of the DRP process. An off-site location that's close to the primary data center might seem ideal -- in terms of cost, convenience, bandwidth and testing. However, outages differ greatly in scope. A severe regional event can destroy the primary data center and its DR site if the two are located too close together.
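One way to make the distance check concrete is to compute the great-circle distance between the primary data center and a candidate DR site. The sketch below uses the haversine formula; the coordinates and the 150 km minimum separation are assumptions for illustration, since the right threshold depends on the regional risks an organization faces.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius

def distance_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points (haversine formula)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlam = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    # Clamp to 1.0 to guard against floating-point overshoot.
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(min(1.0, a)))

def far_enough(primary: tuple, dr_site: tuple, min_km: float) -> bool:
    """True if the DR site is at least min_km away from the primary site."""
    return distance_km(*primary, *dr_site) >= min_km

# Hypothetical coordinates (latitude, longitude) and threshold.
primary = (40.0, -75.0)
nearby_site = (40.2, -75.0)
distant_site = (42.0, -75.0)
MIN_SEPARATION_KM = 150  # assumed policy value, not a standard

print(far_enough(primary, nearby_site, MIN_SEPARATION_KM))
print(far_enough(primary, distant_site, MIN_SEPARATION_KM))
```

Distance alone doesn't capture shared risks such as a common power grid or flood plain, so a check like this is only a first filter.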

How to build a disaster recovery plan

The disaster recovery plan process involves more than simply writing the document. Before writing the DRP, a risk analysis and business impact analysis can help determine where to focus resources during the disaster recovery process.

Typically, the following steps are involved in creating a DRP:

- Conduct a BIA. The BIA identifies the effects of disruptive events and is the starting point for identifying risk within the context of DR. It also generates the RTO and RPO.

- Create a risk analysis. The RA identifies threats and vulnerabilities that could disrupt the operation of systems and processes highlighted in the BIA. It assesses the likelihood of a disruptive event and outlines its potential severity.

- Develop a goals statement. A goals statement delineates the objectives an organization aims to accomplish during or after a disaster, encompassing both the RTO and RPO.

- Identify the DRP team. Disaster recovery plans are living documents. Involving employees -- from management to entry-level -- increases the value of the plan. Each disaster recovery plan should outline the individuals tasked with executing it and include measures to use in the absence of key personnel.

- Take inventory of IT. Create an IT inventory list that includes each item's cost, model, serial number, manufacturer and whether it's rented or owned.

- Create an internal communication strategy. Another component of the DRP is the communication plan. This strategy should detail how both internal and external crisis communication will be handled. Internal communication includes alerts that can be sent using email, overhead building paging systems, voice messages and text messages to mobile devices. Examples of internal communication include instructions to evacuate the building and meet at designated places, updates on the progress of the situation and notices when it's safe to return to the building.

- Create an external communication strategy. External communications are even more essential to the BCP and include instructions on how to notify family members in the case of injury or death; how to inform and update key clients and stakeholders on the status of the disaster; and how to discuss disasters with the media.

- Develop a data backup, recovery and redundancy plan. A detailed plan for data backup, system recovery and restoration of operations should be mandated. The plan should also highlight redundancy and failover mechanisms for critical infrastructure and systems.

- Test the DR plan. The DR plan should be regularly tested to pinpoint vulnerabilities and areas of improvement. Training should also be conducted as part of testing to familiarize employees with their roles and responsibilities when dealing with a disaster.

- Regularly review and revise the plan. The disaster recovery plan should be consistently reviewed and revised to account for changes in business technology, operations and potential risk factors.
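The risk analysis step above weighs the likelihood of a disruptive event against its potential severity. A simple way to sketch that is a likelihood-times-severity score used to rank threats. The 1-to-5 scales, the multiplication rule and the sample threats below are assumptions for illustration, not a prescribed method.

```python
from dataclasses import dataclass

@dataclass
class Risk:
    threat: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    severity: int    # assumed scale: 1 (minor) to 5 (critical)

    @property
    def score(self) -> int:
        """Simple risk score: likelihood multiplied by severity."""
        return self.likelihood * self.severity

def rank_risks(risks: list) -> list:
    """Return risks sorted from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)

# Hypothetical ratings, for illustration only.
risks = [
    Risk("Power outage", likelihood=3, severity=3),
    Risk("Ransomware attack", likelihood=4, severity=5),
    Risk("Regional flood", likelihood=1, severity=5),
]

for risk in rank_risks(risks):
    print(f"{risk.score:>2}  {risk.threat}")
```

The ranked list can help decide where to focus recovery resources first, although a low-likelihood, high-severity event might still deserve attention beyond what its score suggests.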

Disaster recovery plan template

An organization can begin its DRP with a summary of vital action steps and a list of important contact information. That makes the most essential information quickly and easily accessible.

The plan should define the roles and responsibilities of disaster recovery team members and outline the criteria to launch the plan into action. The plan should specify, in detail, the incident response and recovery activities. Once the template is prepared, it's recommended to store it in a safe and accessible off-site location.

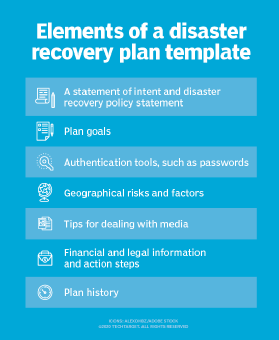

Other important elements of a disaster recovery plan template include the following:

- Statement of intent and DR policy statement.

- Plan goals.

- Authentication tools, such as passwords.

- Geographical risks and factors.

- Tips for dealing with media.

- Financial and legal information and action steps.

- Plan history.

Testing your disaster recovery plan

DRPs are substantiated through testing to identify deficiencies and give organizations the opportunity to fix problems before a disaster occurs. Testing can offer proof that the emergency response plan is effective and hits RPOs and RTOs. Since IT systems and technologies are constantly changing, DR testing also helps ensure a disaster recovery plan is up to date.

Reasons given for not testing DRPs include budget restrictions, resource constraints and a lack of management approval. DR testing takes time, resources and planning. It can also be risky if the test involves using live data.

DR testing varies in complexity. Typically, there are four types of DRP testing:

- Plan review. A plan review includes a detailed discussion of the DRP and looks for missing elements and inconsistencies.

- Tabletop exercise. In a tabletop test, participants walk through disaster scenarios and planned activities step by step to demonstrate whether DR team members know their duties in an emergency. It helps identify gaps in the DR plan and understand how different stakeholders would respond to the situation.

- Parallel testing. Parallel testing involves running both the primary system and the backup or recovery system simultaneously to compare their performance and ensure the effectiveness of the backup system. This test lets organizations assess whether the backup system can handle the workload and maintain data integrity while the primary system is still operational.

- Simulation testing. A simulation test uses resources such as recovery sites and backup systems in what's essentially a full-scale test without an actual failover. Different disaster scenarios are simulated within a controlled environment to verify the effectiveness of the disaster recovery plan and to gauge how quickly an organization can resume business operations after a disaster.
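For the data integrity part of parallel testing, one possible check is to compare checksums of the same items on the primary and recovery systems. The sketch below assumes each system's data is available as a mapping of names to bytes; the function names and sample data are hypothetical.

```python
import hashlib

def checksum(data: bytes) -> str:
    """SHA-256 digest of the data, as a hex string."""
    return hashlib.sha256(data).hexdigest()

def compare_systems(primary: dict, recovery: dict) -> dict:
    """Report items missing from the recovery system or differing from the primary."""
    missing = sorted(name for name in primary if name not in recovery)
    mismatched = sorted(
        name for name in primary
        if name in recovery and checksum(primary[name]) != checksum(recovery[name])
    )
    return {"missing": missing, "mismatched": mismatched}

# Hypothetical snapshots of both systems, for illustration only.
primary = {
    "orders.db": b"order-1,order-2",
    "users.db": b"alice,bob",
    "logs.txt": b"started",
}
recovery = {
    "orders.db": b"order-1,order-2",
    "users.db": b"alice",
}

print(compare_systems(primary, recovery))
# {'missing': ['logs.txt'], 'mismatched': ['users.db']}
```

An empty report indicates the recovery copy matches the primary for every item checked; it says nothing about whether the recovery system can handle the workload, which needs separate performance testing.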

Incident management plan vs. disaster recovery plan

An incident management plan (IMP) -- or incident response plan -- should also be incorporated into the DRP; together, the two create a comprehensive data protection strategy. The goal of both plans is to minimize the negative effects of an unexpected incident, recover from it and return the organization to its normal production levels as fast as possible. However, IMPs and DRPs aren't the same.

The major difference between an incident management plan and a disaster recovery plan lies in their primary objectives, which include the following:

- An IMP focuses on protecting sensitive data during an event and defines the scope of actions to be taken during the incident, including the specific roles and responsibilities of the incident response team.

- The goal of a DRP is to minimize the effects of an unexpected incident, recover from it and return the organization to its normal business operations as fast as possible.

- An IMP is an organized response to security incidents that involve detection, analysis, containment, eradication and recovery procedures. It identifies the most likely threats and documents steps to prevent them. A DRP focuses on defining the recovery objectives and the steps that must be taken to bring the organization back to an operational state after an incident occurs.

- An IMP focuses on how a business will detect and manage a cyberattack to reduce potential damage and consequences to the business.

- A DRP addresses the bigger questions surrounding a potential cyberattack, identifying how the business will recover and resume normal work operations after a security incident.

Examples of a disaster recovery plan

An organization can apply a disaster recovery plan to various situations. The following are examples of specific scenarios and the corresponding actions outlined in a disaster recovery plan:

Example 1. Data center failure

Scenario: A data center experiences a power outage or hardware failure.

Response:

- Activate backup generators to ensure continuous power supply.

- Initiate failover to redundant systems or secondary data centers.

- Restore data from backups stored offsite or in the cloud.

- Communicate with stakeholders about the status of the situation and expected recovery time.

Example 2. Cyberattack

Scenario: A ransomware attack encrypts critical systems and data of an organization.

Response:

- Isolate affected systems to prevent further spread of the attack.

- Engage cybersecurity experts to identify and mitigate the source of the attack.

- Restore systems from clean backups to minimize data loss and downtime.

- Incorporate additional security measures to prevent future attacks.

Example 3. Human error or accidental data loss

Scenario: An employee inadvertently deletes important files or database records.

Response:

- Immediately stop any ongoing operations that could exacerbate the problem.

- Attempt to recover the deleted data from backups or shadow copies.

- Use data recovery tools or services to retrieve lost information if necessary.

- Review access controls and permissions to minimize the risk of similar incidents in the future.
